Remember when we all collectively lost our minds because an AI could finish a for loop? We treated GitHub Copilot like a parlor trick, a "neat" assistant that occasionally saved us five minutes of typing. Fast forward to 2026, and the parlor trick has swallowed the stage. The traditional "Hello World" entry point, that ritual of manually typing basic syntax until it sticks, is effectively on life support.

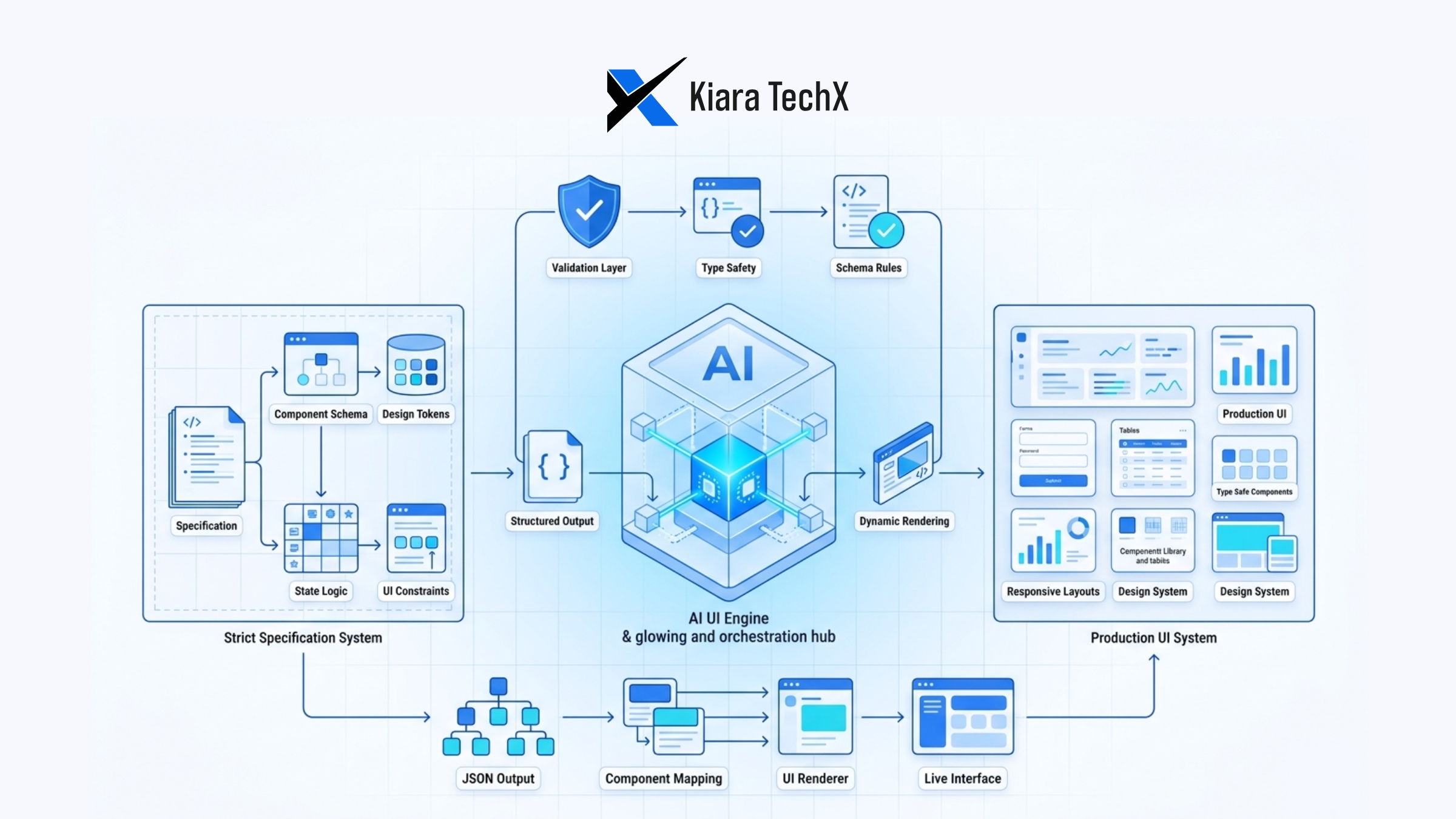

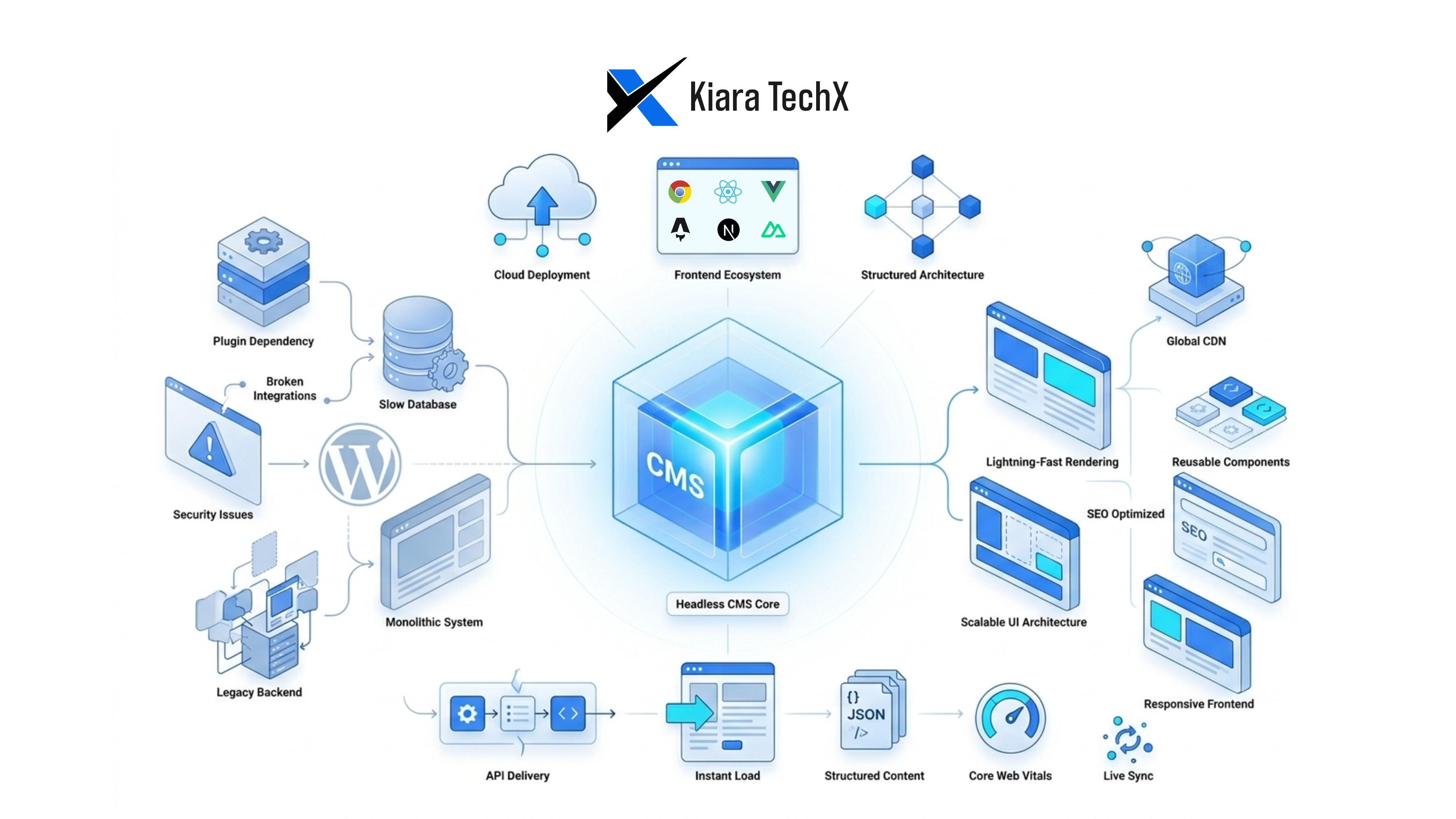

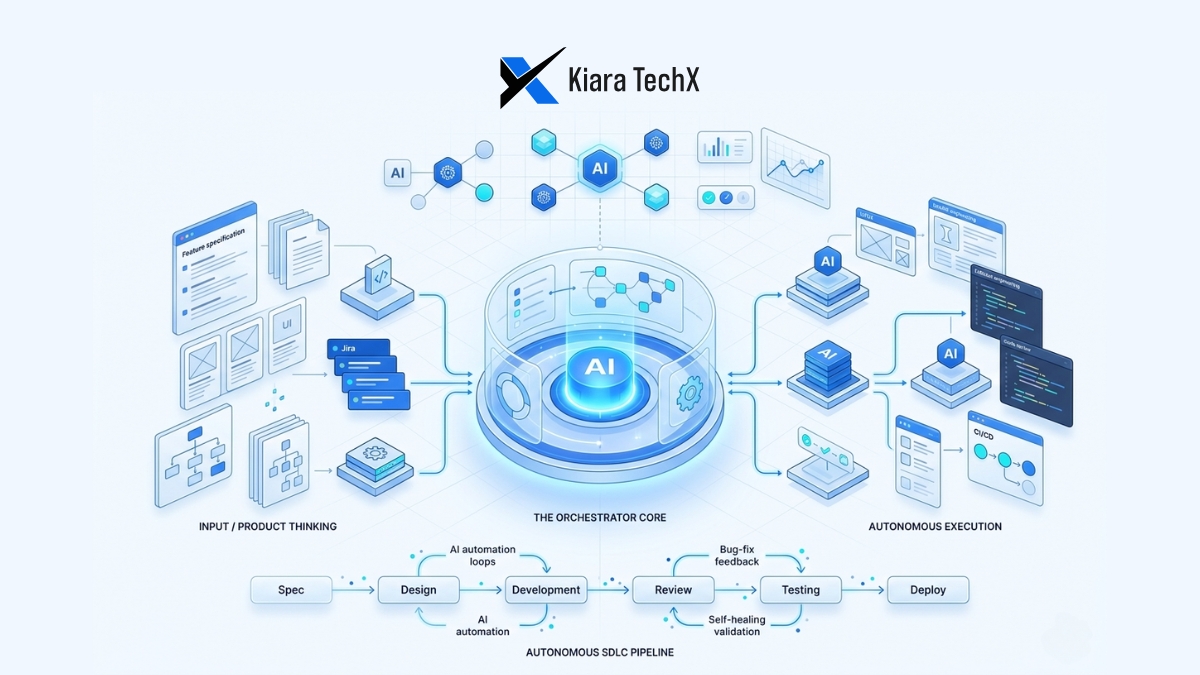

We are witnessing a fundamental shift in how human beings acquire technical skills. It isn't just about writing code faster; it's about the erosion of the "bottom-up" learning model that has defined computer science for decades. When an LLM can generate a full-stack scaffold in thirty seconds, the motivation to spend three weeks learning how to manually center a div or manage memory pointers starts to vanish. We are moving from being "scripters" to "orchestrators," and the transition is as messy as it is exciting.

The End of the Syntax Struggle

For thirty years, the barrier to entry in software development was syntax. If you missed a semicolon, your world ended. Beginners spent 80% of their time fighting the compiler and 20% solving the actual problem. Today, that ratio has flipped. AI tools have turned the "Syntax Barrier" into a "Logic Filter."

Recent data from GitHub shows that students using AI assistants complete tasks nearly 35% faster. But the speed isn't the story. The real change is in the cognitive load. By delegating the boilerplate (the repetitive structures that haven't changed since the 90s), learners are free to focus on system design and architectural thinking from day one.

We used to teach people how to swing a hammer before we showed them how to design a house. Now, we’re handing them a nail gun and asking them why the foundation is leaning. This "top-down" approach allows for faster prototyping and a much higher "dopamine hit" for new learners, which keeps them engaged longer than the dry tutorials of the past.

The Rise of "Vibe Coding"

In 2025, the term "vibe coding" moved from a Twitter meme to a legitimate industry descriptor. It describes a workflow where the developer describes a desired outcome in natural language, reviews the AI-generated output, and "nudges" the system until it matches the intended "vibe."

While setting up your initial environment is still a necessary hurdle, the actual act of writing lines of code is becoming secondary to the act of validating code.

In the traditional model, you learn by doing. In the AI model, you learn by auditing. This requires a completely different set of muscles. You don't need to remember the specific arguments for a Python library; you need to know that the library exists and how to tell if the AI is hallucinating a security vulnerability in the implementation.
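To make "learning by auditing" concrete, here is a minimal sketch of the kind of flaw a reviewer has to catch. Everything in it is hypothetical: the `users` table, the two `find_user_*` functions, and the sample data are invented for illustration, and the "unsafe" version simply stands in for plausible-looking generated code. It uses Python's standard `sqlite3` module.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Looks fine at a glance, but the input is pasted straight into the SQL
    # text, so a crafted name can rewrite the query (SQL injection).
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats the input as data, never as SQL.
    query = "SELECT name, role FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


conn = make_db()
payload = "nobody' OR '1'='1"

print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```

Both functions return the same result for an ordinary name like `alice`, which is exactly why this class of bug survives a quick "does it run?" check. The audit skill is knowing to try the hostile input.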

The Risk of Skill Atrophy

There is, of course, a dark side to this "Easy Mode" for education. If you never struggle with the "how," do you ever truly understand the "why"? There is a growing concern among senior engineers that the next generation of developers will be "syntax-illiterate."

If the AI produces 90% of the code, and the developer only provides the final 10% of polish, what happens when the AI is wrong? We are seeing a rise in "shallow mastery," where a junior dev can build a complex application but cannot explain how the data flows between the frontend and the database without checking their prompt history.

This creates a paradox. We are more productive than ever, yet our foundational understanding is becoming more brittle. The risk isn't that AI will replace developers; it's that it will create a generation of developers who are paralyzed when the "Auto-complete" goes offline.

Shifting the Curriculum: From Coding to Systems Thinking

Because the "how" is being automated, the "what" and "why" must become the core of education. If I were designing a CS curriculum today, I wouldn't start with C++. I would start with:

- Prompt Engineering & Intent Alignment: How to specify requirements so clearly that an AI can't misinterpret them.

- Security Auditing: Learning to spot the subtle "logic bombs" AI often leaves behind.

- Systems Architecture: Understanding how components talk to each other, rather than how to write the components themselves.

- Debugging the "Black Box": What to do when the generated code works, but you have no idea how it’s doing it.
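One possible way to practice the first item on that list is to write the requirement as executable checks before prompting, then run whatever comes back against them. The sketch below assumes a made-up task (a `slugify` helper for URL slugs); the `SPEC` cases, the `check_spec` function, and both implementations are hypothetical, with `slugify` standing in for a generated draft.

```python
import re

# The requirement, written as input/expected pairs instead of prose.
SPEC = [
    ("Hello World", "hello-world"),
    ("  Vibe   Coding!  ", "vibe-coding"),
    ("C++ in 2026", "c-in-2026"),
    ("", ""),
]


def slugify(title: str) -> str:
    """Stand-in for a generated draft that happens to meet the spec."""
    # Collapse every run of non-alphanumeric characters into one hyphen,
    # then trim hyphens left over at either end.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


def slugify_naive(title: str) -> str:
    """Stand-in for a plausible draft that only handles the happy path."""
    return title.lower().replace(" ", "-")


def check_spec(fn, spec):
    """Return (input, expected, actual) for every case the function fails."""
    failures = []
    for text, expected in spec:
        actual = fn(text)
        if actual != expected:
            failures.append((text, expected, actual))
    return failures


print(check_spec(slugify, SPEC))             # [] means the draft meets the spec
print(len(check_spec(slugify_naive, SPEC)))  # 2 cases fail
```

The naive version passes the obvious "Hello World" case and still fails on extra whitespace and punctuation. A spec written as cases makes that gap visible without anyone having to read the generated code line by line first.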

The goal is to turn learners into "Product Engineers" rather than "Code Monkeys." The value is no longer in the characters typed; it’s in the problems solved.

How Kiara TechX Approaches This

At Kiara TechX, we don’t view AI as a replacement for foundational knowledge; we view it as a high-fidelity simulator. We encourage our team to use AI tools not as a "copy-paste" engine, but as a "Socratic tutor."

Our internal mentorship programs focus heavily on Critical Code Review. We believe that if you use an AI to generate a solution, you owe the codebase an explanation of exactly how that solution works. We bridge the gap between "vibe coding" and "engineering excellence" by treating every AI output as a draft that must be defended by the human who prompted it. This ensures that while our speed increases, our collective "IQ" as a technical organization continues to grow.

While we embrace the speed of modern tools, we maintain a "Human-in-the-Loop" philosophy that prioritizes deep understanding over superficial output.

The "Hello World" era is over. The era of the "System Architect" is just beginning. To stay relevant, learners must move beyond the syntax and master the art of directing the machine.

Are you ready to evolve your development workflow? Connect with the Kiara TechX team today to learn how we integrate AI-driven efficiency without sacrificing technical depth.