Ever feel like you’re paying for a front-row seat at a concert, but the AI spends two hours reading the Terms and Conditions instead of playing the music? You ask a simple question about a React hook, and suddenly your agent is "exploring" 45 unrelated files in your node_modules. By the time it actually writes the code, you’ve burned through 50,000 tokens just to fix a variable name.

In 2026, the "infinite context" myth has officially met reality. While context windows have expanded to 1M+ tokens for some frontier models, neither our API budgets nor the model's "attention" has scaled with them. Efficiency isn’t just about being cheap; it’s about signal-to-noise. When an agent reads 25 files to find 3 functions, the "noise" of those irrelevant files starts to degrade the quality of the "signal" it needs to solve your problem.

Why Token Management Matters in 2026

We’ve moved past the era of simple autocomplete. Today’s agents (Claude Code, GitHub Copilot’s Agent mode, and Cursor) operate with high levels of autonomy. This autonomy comes with a hidden cost: recursive context bloat. Every time an agent runs a shell command, reads a file, or catches a linter error, that output is appended to the conversation history, and the whole history is fed back to the model on the next turn.

If you aren't careful, a single three-hour session can easily balloon into a $20 API bill. More importantly, even the best models suffer from "lost in the middle" syndrome. They start forgetting the specific architectural constraints you mentioned in the first prompt because they’re too busy processing the 500-line stack trace from a failed test run five minutes ago.

- Cost vs. Quality: High input token usage doesn't just cost money; it slows down the "Time to Code" as the model parses your entire repo.

- Contextual Decay: The more "fluff" in the window, the more likely the agent is to hallucinate or ignore your `.eslintrc` rules.
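To put rough numbers on that bloat, here is a small sketch of the arithmetic. It assumes a stateless chat API where every turn resends the full history as input, and it ignores prompt caching. The turn sizes and the price per million tokens are made-up illustration values, not real pricing.

```python
def cumulative_input_tokens(turn_sizes):
    """Total input tokens billed when every turn resends the whole history.

    turn_sizes[i] is the number of new tokens added to the history at turn i
    (prompt, tool output, stack trace, ...). Assumes no prompt caching.
    """
    total = 0
    history = 0
    for new_tokens in turn_sizes:
        history += new_tokens  # the history only ever grows
        total += history       # the whole history is sent as input again
    return total


# Hypothetical session: 40 turns, each adding about 2,000 tokens of output/logs.
turns = [2_000] * 40
billed = cumulative_input_tokens(turns)

# Hypothetical price, for illustration only: $3 per million input tokens.
PRICE_PER_MILLION = 3.00
print(f"history at the end:  {sum(turns):,} tokens")
print(f"input tokens billed: {billed:,}")
print(f"approx. input cost:  ${billed / 1_000_000 * PRICE_PER_MILLION:.2f}")
```

With these numbers the final history is only 80,000 tokens, yet 1,640,000 input tokens are billed across the session, because the total grows roughly with the square of the number of turns. That is why trimming what gets appended early in a session pays off more than trimming late.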

Core Strategies to Save Tokens

The most effective way to save tokens is to treat your AI agent like a highly talented but easily distracted junior developer. You wouldn't tell a new hire to "look around the office until you figure out how the database works." You’d give them a map.

- Precise Context Injection: Instead of saying "fix the bug in auth," use `@` symbols or direct paths to point to the specific `authMiddleware.ts` and `userModel.ts`. Forcing the agent to search for the context is a token-burning ritual you can’t afford.

- Persistent Project Context (CLAUDE.md / AGENTS.md): Rather than re-explaining your tech stack every time you start a session, keep a lean Markdown file in your root directory. This acts as a "Save Game" file for your project’s personality, containing only the high-signal info: core architecture, linting rules, and critical file paths.

- Task Atomicity: Break large features into small, focused prompts. Instead of asking to "build the entire checkout flow," ask to "implement the Stripe webhook handler." While setting up your framework is crucial, the real challenge is keeping each interaction scoped to a single logical change.
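If you want a feel for how much precise context injection saves, a rough comparison like the one below can help. This is only a sketch: the four-characters-per-token rule is a crude heuristic rather than a real tokenizer, and the helper names (`estimate_tokens`, `context_cost`, `compare_scoped_vs_broad`) and example paths are invented for illustration.

```python
from pathlib import Path


def estimate_tokens(text):
    """Very rough estimate: about 4 characters per token (heuristic, not a tokenizer)."""
    return len(text) // 4


def context_cost(paths):
    """Estimated tokens needed to load the given files into context."""
    return sum(
        estimate_tokens(Path(p).read_text(encoding="utf-8", errors="ignore"))
        for p in paths
    )


def compare_scoped_vs_broad(root, targeted, pattern="*.ts"):
    """Compare loading only `targeted` files with loading every match under `root`.

    Returns a (scoped_tokens, broad_tokens) tuple.
    """
    root = Path(root)
    broad = [p for p in root.rglob(pattern) if p.is_file()]
    scoped = [root / name for name in targeted]
    return context_cost(scoped), context_cost(broad)


# Example call (hypothetical project layout):
# scoped, broad = compare_scoped_vs_broad(
#     "src", ["auth/authMiddleware.ts", "models/userModel.ts"]
# )
# print(f"scoped: ~{scoped} tokens, whole tree: ~{broad} tokens")
```

The exact numbers will be off, but the ratio between the two is usually what matters when deciding whether to point the agent at files or let it explore.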

Tool-Specific Optimization Tips

Each major player in the agent space has specific levers you can pull to keep your token count under control.

1. Claude Code: The CLI Surgeon

Claude Code is a beast at autonomous tasks, but its terminal-based nature makes it prone to output bloat.

- The `/compact` Command: Use this proactively. Most developers wait for the "context nearly full" warning, but running `/compact` after finishing a sub-task allows Claude to summarize the history and dump the raw logs, keeping its reasoning sharp.

- Adaptive Thinking: In the latest 2026 updates, you can set `thinking: {type: 'adaptive'}`. This allows the model to skip expensive internal "chain-of-thought" reasoning for simple tasks like file renaming, saving significant output tokens.
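The idea behind compaction can be sketched in a few lines. This is not how Claude Code implements it internally; it is a minimal illustration that assumes the history is a list of role/content dictionaries and that `summarize` is a hypothetical callable (in a real agent, a model call). The default summarizer here just truncates old messages so the example stays self-contained.

```python
def compact_history(messages, keep_last=4, summarize=None):
    """Replace all but the last `keep_last` messages with one summary message.

    `messages` is a list of {"role": ..., "content": ...} dicts.
    `summarize` is a hypothetical callable that condenses old messages into a
    short string. The default only truncates each message, for illustration.
    """
    if len(messages) <= keep_last:
        return list(messages)  # nothing worth compacting yet

    split = len(messages) - keep_last
    old, recent = messages[:split], messages[split:]

    if summarize is None:
        def summarize(msgs):
            return "\n".join(f"{m['role']}: {m['content'][:80]}" for m in msgs)

    summary = {
        "role": "user",
        "content": "Summary of earlier work:\n" + summarize(old),
    }
    return [summary] + recent


# Ten turns collapse into one summary plus the three most recent messages.
history = [{"role": "user", "content": f"step {i} output..."} for i in range(10)]
compacted = compact_history(history, keep_last=3)
print(len(history), "messages ->", len(compacted), "messages")
```

Compacting right after a finished sub-task works well because the raw logs from that sub-task are rarely needed again, while the conclusions are.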

2. GitHub Copilot: Controlled Agency

Copilot’s Agent mode thrives on what you have open in your IDE.

- Tab Hygiene: If you have 20 tabs open, the agent is likely pulling snippets from all of them into its context. Close irrelevant files.

- Execution Plan Review: Before Copilot starts a multi-file refactor, it generates a plan. Read it! If you see "Read 10 files in `/utils` to understand naming," and you already know the naming convention, tell it: "Skip the research, use the PascalCase convention defined in `STYLE.md`."

3. Cursor: Master of the @ Symbol

Cursor is the king of granular context.

- `@symbol` vs. `@file`: This is the ultimate token-saver. If you only need to reference a specific interface or class, using `@symbol` is significantly cheaper than loading a 1,000-line file into the context window.

- Indexing Management: Cursor indexes your codebase, but you should manually ignore large directories like `dist`, `build`, or `logs` in a `.cursorignore` file (not `.cursorrules`, which holds instructions for the agent) to prevent the indexer from feeding useless data to the agent.
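To see why symbol-level references are so much cheaper than whole files, here is a small stand-in experiment. It uses Python's built-in `ast` module on Python source, purely as an analogy; it says nothing about how Cursor resolves symbols internally, and `extract_symbol` and the sample module are invented for this example.

```python
import ast


def extract_symbol(source, name):
    """Return the source of the top-level function or class called `name`, or None.

    Decorators above the definition are not included in the returned text.
    """
    tree = ast.parse(source)
    wanted = (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)
    for node in tree.body:
        if isinstance(node, wanted) and node.name == name:
            return ast.get_source_segment(source, node)
    return None


# A made-up module: one class we care about, surrounded by unrelated code.
SAMPLE = '''
def helper():
    return 1

class User:
    def __init__(self, name):
        self.name = name

def unrelated():
    return "x" * 1000
'''

snippet = extract_symbol(SAMPLE, "User")
print(snippet)
print(len(snippet), "characters instead of", len(SAMPLE))
```

On a real 1,000-line file the gap is far larger than in this toy sample, which is the whole argument for reaching for the symbol first.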

Advanced Techniques for the Power User

If you want to move from "saving pennies" to "architectural efficiency," you need to change how the agent interacts with your data.

Symbol Indexing & MCP Tool Use

Instead of letting an agent read whole files, leverage the Model Context Protocol (MCP). By using a specialized MCP server for your database or documentation, the agent can query for the specific "needle" it needs rather than swallowing the entire haystack. In 2026, many developers use the enable-experimental-mcp-cli flag, which keeps tool schemas out of the main context window until they are actually invoked.
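The "keep schemas out of context until invoked" idea can be illustrated with a toy registry. To be clear, this is not the MCP SDK and not how that flag works under the hood; `LazyToolRegistry`, `lookup_order`, and the schema are all hypothetical names made up for this sketch.

```python
class LazyToolRegistry:
    """Expose only tool names and one-line descriptions until a tool is invoked."""

    def __init__(self):
        self._tools = {}   # name -> (description, schema_loader, handler)
        self._loaded = {}  # name -> full schema, filled in on first use

    def register(self, name, description, schema_loader, handler):
        self._tools[name] = (description, schema_loader, handler)

    def listing(self):
        """What goes into the prompt up front: names and short descriptions only."""
        return [
            {"name": name, "description": desc}
            for name, (desc, _loader, _handler) in self._tools.items()
        ]

    def invoke(self, name, **kwargs):
        """Load the full schema on first use, then call the handler."""
        _desc, schema_loader, handler = self._tools[name]
        if name not in self._loaded:
            self._loaded[name] = schema_loader()
        return handler(**kwargs)

    def loaded_schemas(self):
        """Schemas that have actually been pulled in so far."""
        return dict(self._loaded)


registry = LazyToolRegistry()
registry.register(
    "lookup_order",  # hypothetical tool
    "Fetch one order by id",
    schema_loader=lambda: {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
    handler=lambda order_id: {"order_id": order_id, "status": "shipped"},
)

print(registry.listing())         # cheap: no schemas yet
print(registry.invoke("lookup_order", order_id="A1"))
print(registry.loaded_schemas())  # only the tool that was actually used
```

With dozens of registered tools, the up-front listing stays a few lines long, and the agent only pays for the schemas it ends up using.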

Structured XML Prompts over Prose

Prose is expensive and ambiguous. When giving complex instructions, use XML tags like `<constraints>` or `<context>`. Tagged sections make it unambiguous which text is background and which is a hard rule, and models like Claude and GPT-5 tend to follow clearly delimited instructions more reliably than conversational fluff, leading to fewer "I'm sorry, I didn't quite catch that" follow-up loops.
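A tiny helper makes the habit easy to keep. This is a sketch, not a required format: the `<task>` tag, the `build_prompt` name, and the sample values are assumptions added for illustration, and the tags act as plain delimiters rather than parsed XML (nothing is escaped, so code snippets pass through unchanged).

```python
def build_prompt(task, context, constraints):
    """Assemble a prompt with tagged sections instead of one block of prose.

    The tags are plain-text delimiters for the model, not validated XML.
    """
    constraint_lines = "\n".join(f"- {c}" for c in constraints)
    return (
        f"<context>\n{context}\n</context>\n"
        f"<constraints>\n{constraint_lines}\n</constraints>\n"
        f"<task>\n{task}\n</task>"
    )


# Hypothetical values for illustration.
prompt = build_prompt(
    task="Implement the Stripe webhook handler",
    context="Express + TypeScript API; auth lives in authMiddleware.ts",
    constraints=["No new dependencies", "Follow the .eslintrc rules"],
)
print(prompt)
```

Keeping constraints as a short list under their own tag also makes them easy to reuse across prompts instead of retyping them in slightly different words each time.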

The Kiara TechX Perspective

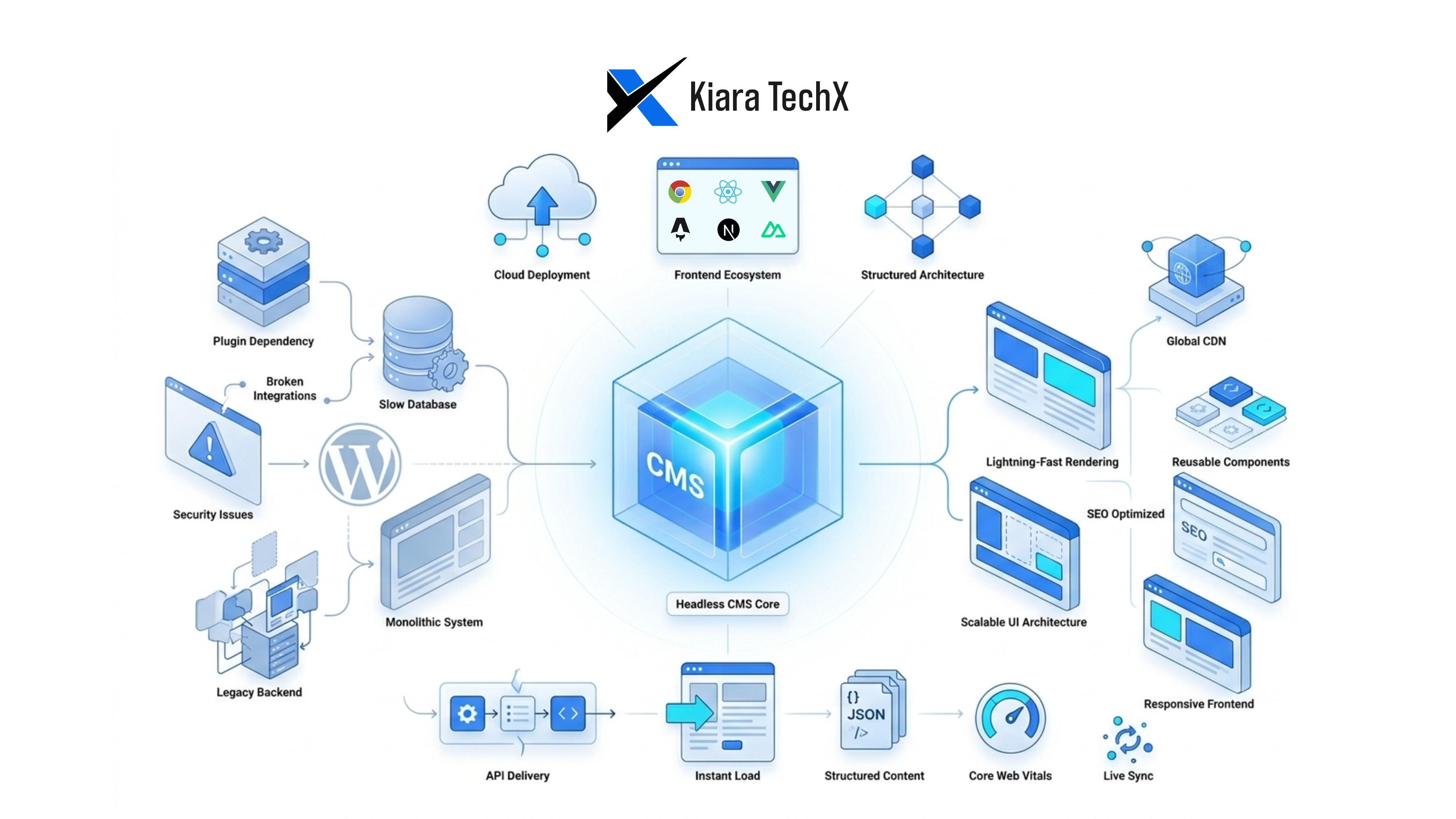

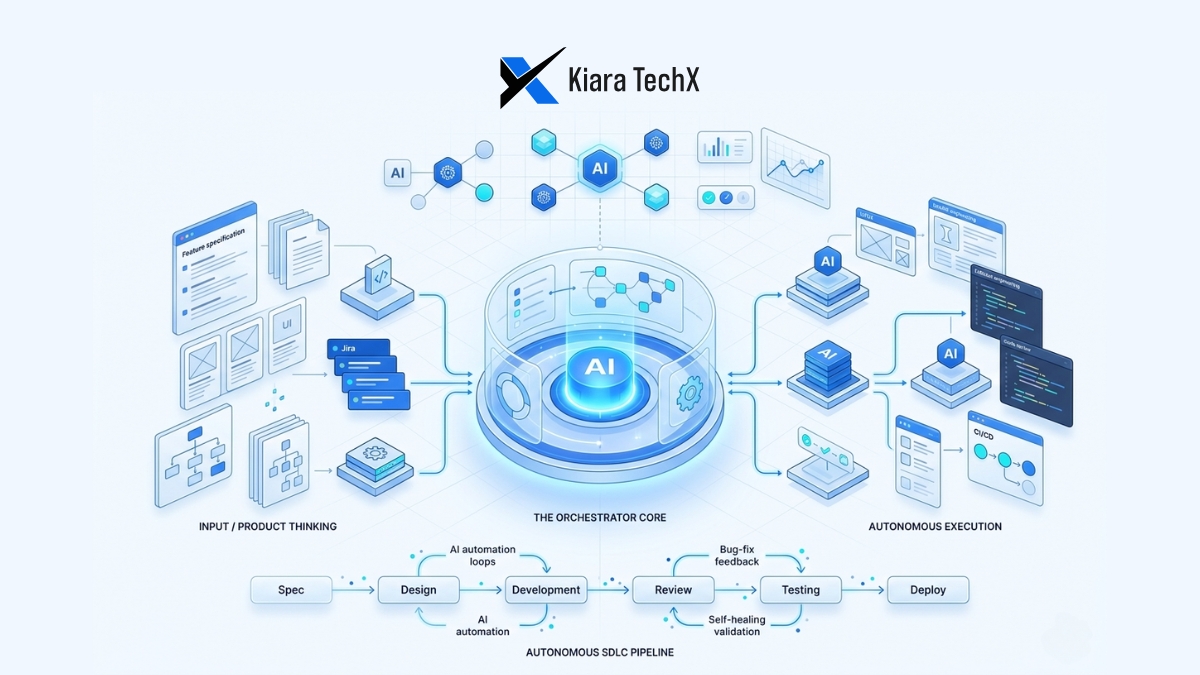

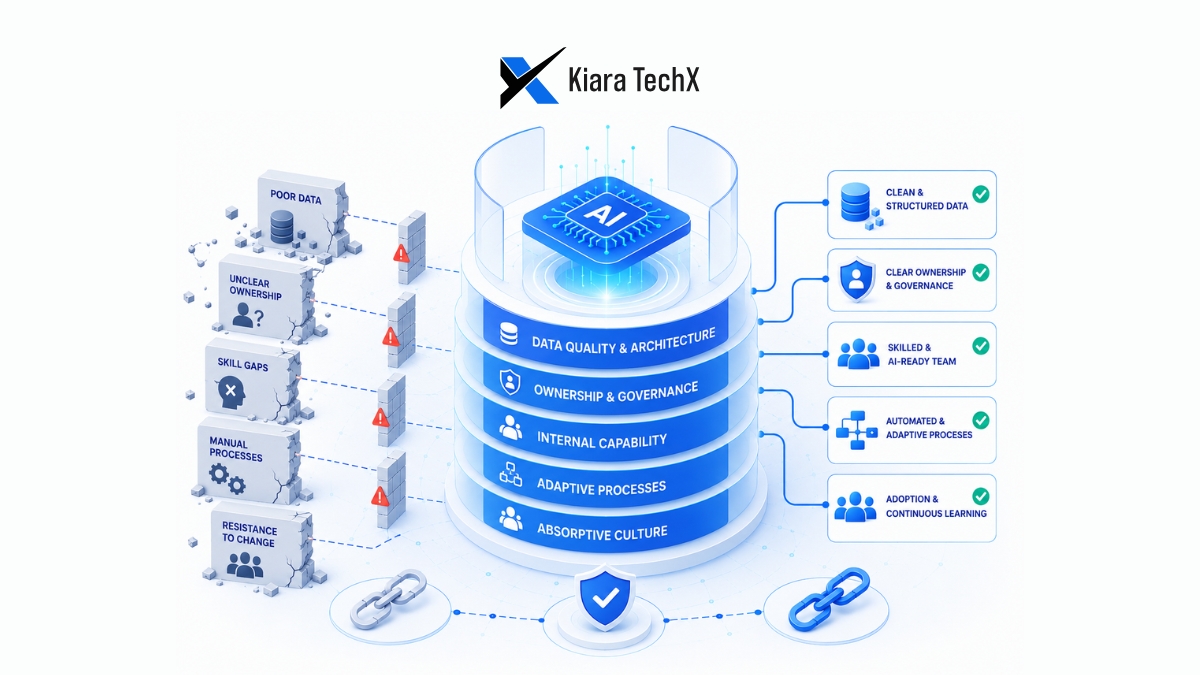

At Kiara TechX, we don't view AI agents as a "set it and forget it" solution. We approach AI integration as an engineering discipline. We’ve found that the best-performing teams are those that build "AI-Ready Codebases." This means keeping boilerplate to a minimum and using lean frameworks. If your framework requires 10 files of boilerplate for one API endpoint, you're essentially paying a "boilerplate tax" in every single AI interaction. We help our partners refactor their architectures to be "high-signal," ensuring that every token spent by an agent is spent on logic, not navigating technical debt. Our approach follows a "Map over Territory" philosophy: give the agent a high-level architectural map so it only dives into the territory (the code) when strictly necessary.

Key Takeaway

The secret to mastering AI agents isn't having the largest context window; it’s having the cleanest one. "Give the agent the map, not the entire territory." By being surgical with your context and proactive with your tool settings, you’ll write better code faster, and your API bill will reflect your efficiency.

Ready to optimize your team's AI workflow and reduce overhead? Reach out to the Kiara TechX team to explore our custom AI-native development strategies and codebase auditing.